From License Purchase to Landed Value: The Flight Plan Playbook for Copilot Adoption

Two numbers from Gartner, sitting side by side, tell you everything about where enterprise AI actually stands right now.

- By 2026, more than 80% of enterprises will have used generative AI APIs or models, or deployed GenAI-enabled applications, up from less than 5% in 2023.

- And yet: fewer than 30% of CEOs are satisfied with the returns on their AI investment, despite an average spend of $1.9M per organization in 2024.

Let that sink in for a second. We’re seeing adoption everywhere, but the real, lasting value is much harder to find. Gartner now puts generative AI firmly in the "Trough of Disillusionment" on its 2025 AI Hype Cycle—not because the tech itself is broken, but because organizations keep struggling to transform rollout into meaningful, sustainable impact.

This is not a Copilot problem. This is a what-happens-after-you-buy-the-license problem. And after running Copilot engagements across airlines, manufacturers, foundations, and financial services firms over the past year, I’ve come to believe it’s the most urgent, most solvable challenge in enterprise AI right now.

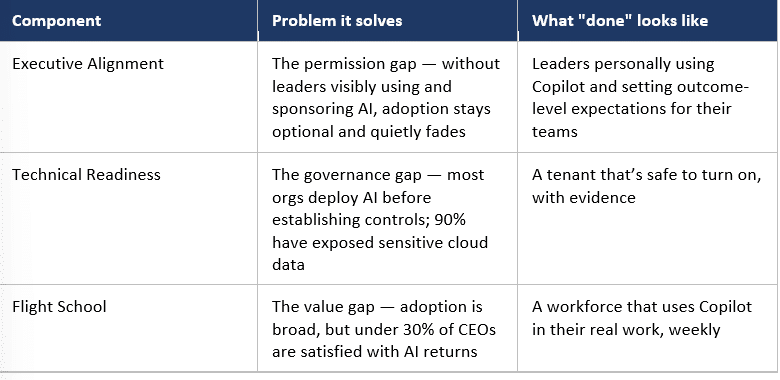

Specifically, it’s three problems stitched together — a permission gap at the top, a governance gap in the middle, and a value gap at the edge. Every Flight Plan we run is built around those three components, in that order. That’s what this post is about.

1. It Starts at the Top: Executive Alignment

Here’s what I’ve seen across every Copilot engagement I’ve led: the single biggest predictor of whether AI takes root in an organization isn’t the size of the license, the maturity of the tenant, or the sophistication of the training program. It’s whether executives and senior leaders personally use the tool, talk about using it, and expect their teams to do the same.

AI adoption is a drip-down effect. When leaders give permission and show excitement for AI, permission and excitement drip down through the organization. It becomes okay, then expected, to try Copilot on a board pre-read, a customer email, a hiring debrief. When leaders don’t, even the best-designed enablement program feels optional. People wait to see whether this is a real priority or another initiative that will quietly fade. They read the signal fast, and they read it right.

Gartner’s under-30% CEO satisfaction number is partly a story about this disconnect. Many CEOs signed the PO but didn’t sign up to personally change how they work, and the rest of the organization took its cue from that. That is why Executive Alignment is the Flight Plan component I used to treat as an assumption and now treat as a deliverable. It looks like:

- A CEO or business unit leader who demonstrably uses Copilot: in their prep, their communication, their decision-making. Not a demo; real work.

- A clearly articulated "why": what this AI investment is meant to change for the business, the customer, or the employee experience, stated by leadership in their own words.

- Sponsorship tied to outcomes, not activations: leaders who ask, "What decisions did we make better this quarter?" not "How many licenses did we turn on?"

- Public permission to experiment, and to be wrong sometimes, modeled from the top: cultures where AI gets quietly used in the shadows don’t scale. Cultures where leaders openly say, "I tried Copilot on this, here’s what worked, here’s what didn’t" do.

Without this, the other two components can still produce moments of value but not durable, organization-wide change. The drip-down is real; it just has to drip from somewhere.

2. The Governance Gap: Technical Readiness

Executive Alignment is necessary but not sufficient. A second pattern stops AI programs before they even reach the adoption question.

IDC finds that 65% of organizations are actively using AI today. But AuditBoard’s 2025 research on AI governance found that only 25% of organizations have a fully implemented AI governance program. The rest have policies drafted, in development, or nothing at all. Adoption is running ahead of the controls needed to scale it safely, which is why so many organizations stall between pilot and production: not for lack of licenses, but for lack of data access controls, data quality, and trust.

The underlying telemetry tells you why. Varonis analyzed 1,000 real IT environments for their 2025 State of Data Security Report — actual data, not self-reported surveys — and found that 90% of organizations have exposed sensitive cloud data. Their broader synthesis put it more starkly: 99% of organizations have sensitive data dangerously exposed to AI tools.

Copilot doesn’t create this exposure. It inherits whatever permissions, sharing links, and sensitivity labels already exist in your tenant. The problem is that most tenants have accumulated a decade of "I’ll clean it up later." Tenant-wide "Everyone except external users" grants. Inherited permissions nobody audited. Sensitivity labels that were configured but never enforced. Sites shared in 2018 with a group that dissolved in 2022.

Before Copilot, this oversharing was technically retrievable but practically buried. Copilot makes it retrievable in seconds through a natural-language prompt. An employee in Accounting types, "Summarize our executive compensation discussions" and suddenly discovers a SharePoint library they were never supposed to find.
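To make the inheritance point concrete, here is a toy model in Python. It is not Copilot's actual retrieval logic. It assumes a simplified world where every document carries a set of principals allowed to read it, and a search returns only the documents whose set overlaps the searcher's own principals. The names `Document`, `visible_to`, and the sample library are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed: frozenset  # principals (users or groups) with read access


# Hypothetical tenant-wide group, standing in for "Everyone except external users".
EVERYONE = "Everyone except external users"


def visible_to(principals, documents):
    """Return titles of documents the given principals can read.

    Toy security trimming: a document is visible when at least one of the
    searcher's principals appears in its allowed set.
    """
    principals = set(principals)
    return [doc.title for doc in documents if principals & doc.allowed]


library = [
    Document("Q3 close checklist", frozenset({"Accounting"})),
    # Overshared years ago and never cleaned up (illustrative data).
    Document("Executive compensation discussion", frozenset({"Board", EVERYONE})),
    Document("Board pre-read", frozenset({"Board"})),
]

# An Accounting employee belongs to their team and to the tenant-wide group.
accountant = ["Accounting", EVERYONE]
print(visible_to(accountant, library))
```

In this sketch the compensation document surfaces for the accountant only because of the tenant-wide grant. Remove that one grant and the same search returns just the Accounting checklist; the search itself never changed.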

This is why the Technical Readiness component of a Flight Plan exists, and why it isn’t optional.

A proper readiness motion is unglamorous, methodical work:

- Discovery and exposure mapping: tenant-wide audit of sharing, permissions, and ghost users

- Quick-win remediation: remove the worst offenders before any user is licensed

- Sensitivity labeling at scale: auto-classification, not manual tagging

- Restricted SharePoint Search and DLP for Copilot: narrow the retrieval surface to what the user should actually find

- Continuous governance: because your data estate keeps moving
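The discovery and quick-win steps above can be sketched as a small triage pass over an exported permissions report. This is an illustration under stated assumptions: the record fields (`site`, `principal`, `last_reviewed_year`), the list of broad principals, and the staleness threshold are all hypothetical, and a real audit would draw on your tenant's own reporting tools rather than hand-built records.

```python
from dataclasses import dataclass

# Assumed set of grants broad enough to count as oversharing.
BROAD_PRINCIPALS = {"Everyone", "Everyone except external users"}


@dataclass
class Grant:
    site: str
    principal: str
    last_reviewed_year: int  # hypothetical field: year access was last reviewed


def triage(grants, current_year, stale_after_years=3):
    """Split grants into quick-win removals and items needing review.

    - "remove_first": tenant-wide grants, the worst offenders.
    - "review": narrower grants not reviewed for stale_after_years or more.
    """
    findings = {"remove_first": [], "review": []}
    for g in grants:
        if g.principal in BROAD_PRINCIPALS:
            findings["remove_first"].append(g.site)
        elif current_year - g.last_reviewed_year >= stale_after_years:
            findings["review"].append(g.site)
    return findings


# Illustrative records only; not data from any real tenant.
report = [
    Grant("HR-Compensation", "Everyone except external users", 2018),
    Grant("Project-Falcon", "Falcon Team", 2018),
    Grant("Finance-Close", "Accounting", 2024),
]

print(triage(report, current_year=2025))
```

Separating "remove first" from "review" mirrors the order of the list: the broadest grants get fixed before any user is licensed, while stale but narrower access feeds the continuous governance backlog.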

It’s not exciting. But it’s the difference between a Copilot program that expands and one that gets paused, descoped, or quietly canceled.

3. The Value Gap: Why "We Bought Copilot" Isn’t a Strategy

When Microsoft 365 Copilot first hit general availability, a lot of leadership teams treated it the way they’d treat a license upgrade. Procurement ran the PO. IT assigned licenses. Marketing sent a welcome email. And then… crickets.

The under-30% CEO satisfaction number is what happens next. People try Copilot once. They get an answer that’s almost, but not quite, what they needed. They don’t know how to prompt it well. They aren’t sure which of their use cases it’s actually good at. The tool sits in their sidebar, unused, a reminder of a budget line item that didn’t quite land.

This is where the Flight School component of a Stoneridge Flight Plan lives.

Flight School isn’t a webinar. It isn’t a one-hour lunch-and-learn. It’s a structured, cohort-based enablement program where your people, in their roles and on their actual work, learn to get real value out of Copilot.

We run it with:

- Role-specific prompt libraries: so a controller, a project manager, and a sales director all leave with prompts that apply to their next Tuesday.

- Champion networks: peer-to-peer learning across the organization, because IT pushing adoption from the outside doesn’t scale. People change how they work when someone at a neighboring desk shows them how.

- Rhythm and repetition: weekly office hours, monthly use-case shares, quarterly measurement. Adoption is a practice, not an event.

The orgs that move from "we deployed it" to "it’s how we work here" are not the ones with bigger budgets. They’re the ones who treat enablement as seriously as they treated the procurement.

The Flight Plan: Three Components, One Outcome

A Flight Plan is, at its simplest, these three components stitched together into one engagement: Executive Alignment, Technical Readiness, and Flight School.

No single component produces durable value on its own. Executive Alignment without Technical Readiness creates excited leaders pointing at data their employees shouldn’t see. Technical Readiness without Flight School produces a safe tenant full of dormant licenses. Flight School without Executive Alignment delivers enthusiastic practitioners stranded by leaders who never quite followed through.

Together, they get you landed value — the thing your CFO signed the PO expecting.

Where I’m Placing My Bet

I’ll be honest: I have a personal mission built into this work. I want to see that under-30% CEO satisfaction number climb toward something worth putting in a board deck. Not as a vanity metric — as a proxy for whether AI investments actually change how organizations work.

Every Flight Plan we run is a small test of whether that’s possible. So far, the answer is yes, but only when all three components are in place and when leadership treats the engagement as a change initiative rather than a software rollout.

If you’ve bought Microsoft 365 Copilot and you’re staring at low adoption, data exposure anxiety, or both — that’s the conversation I want to have. The tools are better than they’ve ever been. The gap between license and value is a solvable problem.

Let’s close it.

Under the terms of this license, you are authorized to share and redistribute the content across various mediums, subject to adherence to the specified conditions: you must provide proper attribution to Stoneridge as the original creator in a manner that does not imply their endorsement of your use, the material is to be utilized solely for non-commercial purposes, and alterations, modifications, or derivative works based on the original material are strictly prohibited.

Responsibility rests with the licensee to ensure that their use of the material does not violate any other rights.